Geeking in technology since 1985, with IBM Development, focused upon Docker and Kubernetes on the IBM Z LinuxONE platform. In the words of Dr Cathy Ryan, "If you don't write it down, it never happened". To paraphrase one of my clients, "Every day is a school day". I do, I learn, I share. The postings on this site are my own and don’t necessarily represent IBM’s positions, strategies or opinions. Remember, YMMV https://infosec.exchange/@davehay

Saturday, 30 December 2023

Getting started with Python in Jupyter Notebooks

Saturday, 4 November 2023

More on macOS SMB sharing

Monday, 23 October 2023

Why I can't install jq on Ubuntu 20.04

Distributor ID: Ubuntu

Description: Ubuntu 20.04.6 LTS

Release: 20.04

Codename: focal

Building dependency tree

Reading state information... Done

E: Unable to locate package jq

# newer versions of the distribution.

deb http://us.archive.ubuntu.com/ubuntu focal main

# deb-src http://us.archive.ubuntu.com/ubuntu focal main restricted

## Major bug fix updates produced after the final release of the

## distribution.

deb http://us.archive.ubuntu.com/ubuntu focal-updates main

# deb-src http://us.archive.ubuntu.com/ubuntu focal-updates main restricted

## N.B. software from this repository is ENTIRELY UNSUPPORTED by the Ubuntu

## team. Also, please note that software in universe WILL NOT receive any

## review or updates from the Ubuntu security team.

# deb-src http://us.archive.ubuntu.com/ubuntu focal universe

# deb-src http://us.archive.ubuntu.com/ubuntu focal-updates universe

## N.B. software from this repository is ENTIRELY UNSUPPORTED by the Ubuntu

## team, and may not be under a free licence. Please satisfy yourself as to

## your rights to use the software. Also, please note that software in

## multiverse WILL NOT receive any review or updates from the Ubuntu

## security team.

# deb-src http://us.archive.ubuntu.com/ubuntu focal multiverse

# deb-src http://us.archive.ubuntu.com/ubuntu focal-updates multiverse

## N.B. software from this repository may not have been tested as

## extensively as that contained in the main release, although it includes

## newer versions of some applications which may provide useful features.

## Also, please note that software in backports WILL NOT receive any review

## or updates from the Ubuntu security team.

deb http://us.archive.ubuntu.com/ubuntu focal-backports main

# deb-src http://us.archive.ubuntu.com/ubuntu focal-backports main restricted universe multiverse

## Uncomment the following two lines to add software from Canonical's

## 'partner' repository.

## This software is not part of Ubuntu, but is offered by Canonical and the

## respective vendors as a service to Ubuntu users.

# deb http://archive.canonical.com/ubuntu focal partner

# deb-src http://archive.canonical.com/ubuntu focal partner

deb http://us.archive.ubuntu.com/ubuntu focal-security main

# deb-src http://us.archive.ubuntu.com/ubuntu focal-security main restricted

# deb-src http://us.archive.ubuntu.com/ubuntu focal-security universe

# deb-src http://us.archive.ubuntu.com/ubuntu focal-security multiverse

# deb-src http://us.archive.ubuntu.com/ubuntu focal universe

# deb-src http://us.archive.ubuntu.com/ubuntu focal-updates universe

# deb-src http://us.archive.ubuntu.com/ubuntu focal-backports main restricted universe multiverse

# deb-src http://us.archive.ubuntu.com/ubuntu focal-security universe

deb http://us.archive.ubuntu.com/ubuntu focal universe

deb http://us.archive.ubuntu.com/ubuntu focal-updates universe

deb http://us.archive.ubuntu.com/ubuntu focal-security universe

EOF

and tried again: -

Building dependency tree

Reading state information... Done

The following additional packages will be installed:

libjq1 libonig5

The following NEW packages will be installed:

jq libjq1 libonig5

0 upgraded, 3 newly installed, 0 to remove and 0 not upgraded.

Need to get 313 kB of archives.

After this operation, 1,062 kB of additional disk space will be used.

Get:1 http://us.archive.ubuntu.com/ubuntu focal/universe amd64 libonig5 amd64 6.9.4-1 [142 kB]

Get:2 http://us.archive.ubuntu.com/ubuntu focal-updates/universe amd64 libjq1 amd64 1.6-1ubuntu0.20.04.1 [121 kB]

Get:3 http://us.archive.ubuntu.com/ubuntu focal-updates/universe amd64 jq amd64 1.6-1ubuntu0.20.04.1 [50.2 kB]

Fetched 313 kB in 1s (440 kB/s)

Selecting previously unselected package libonig5:amd64.

(Reading database ... 108600 files and directories currently installed.)

Preparing to unpack .../libonig5_6.9.4-1_amd64.deb ...

Unpacking libonig5:amd64 (6.9.4-1) ...

Selecting previously unselected package libjq1:amd64.

Preparing to unpack .../libjq1_1.6-1ubuntu0.20.04.1_amd64.deb ...

Unpacking libjq1:amd64 (1.6-1ubuntu0.20.04.1) ...

Selecting previously unselected package jq.

Preparing to unpack .../jq_1.6-1ubuntu0.20.04.1_amd64.deb ...

Unpacking jq (1.6-1ubuntu0.20.04.1) ...

Setting up libonig5:amd64 (6.9.4-1) ...

Setting up libjq1:amd64 (1.6-1ubuntu0.20.04.1) ...

Setting up jq (1.6-1ubuntu0.20.04.1) ...

Processing triggers for man-db (2.9.1-1) ...

Processing triggers for libc-bin (2.31-0ubuntu9.12) ...

jq-1.6

"friends": [

{

"givenName": "Dave",

"familyName": "Hay"

},

{

"givenName": "Homer",

"familyName": "Simpson"

},

{

"givenName": "Marge",

"familyName": "Simpson"

},

{

"givenName": "Lisa",

"familyName": "Simpson"

},

{

"givenName": "Bart",

"familyName": "Simpson"

}

]

}

Thursday, 15 June 2023

macOS to macOS File Sharing - Don't work, try The IT Crowd

I use File Sharing between two Macs on the same network, both running the latest macOS 13.4 Ventura.

For some strange reason I wasn't able to access one Mac - a Mini - from the other - a MacBook Pro.

As is always the case, the internet solved it for me: -

Fix File Sharing Not Working in MacOS Ventura

TL;DR; turn it off, and on again

Yes, The IT Crowd strikes again

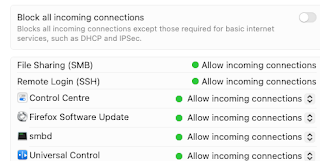

Having said that, Dan Moren, he of Six Colors, acclaimed author AND MacBreak Weekly host, deserves full thanks for directing me to macOS' built-in firewall tool: -

/usr/libexec/ApplicationFirewall/socketfilterfw --listapps

Solving a file sharing mystery: Why one Mac can’t see another

Whilst the TIOAOA trick worked this time, who knows what I'll need next time ...

Monday, 22 May 2023

On the subject of aliases ...

(a) typing more stuff

(b) looking in my notes to remember what I need, in order to type more stuff

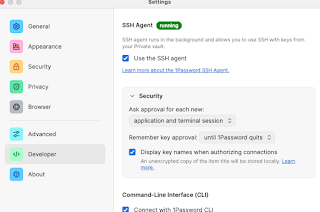

Using 1Password to store API keys ...

Following on from my earlier post: -

Wow, why have I not been using 1Password for my SSH keys before today ?

I've got a little further, with various API keys now stored in my 1Password vault

This is far simpler, in that the vault entry, of type API Credential, only needs to have a name/title e.g. IBM Cloud API Key and a credential, the actual API key itself.

With that in place, I've then set up an alias to retrieve/display the API key: -

alias apikey='export APIKEY=$(op item get "IBM Cloud" --field credential) && echo $APIKEY'

in ~/.zprofile, meaning that I just need to run the "command" apikey to ... see my API key.

I will, of course, be leveraging the same API keys in various other scripts/aliases, including things that login to IBM Cloud etc.

Saturday, 20 May 2023

Wow, why have I not been using 1Password for my SSH keys before today ?

Friday, 19 May 2023

Today I Learned - how to deal with Shell Check SC2086

So, technically I learned this yesterday but 🤷‍♀️

As part of our CI/CD testing, we run shellcheck against our shell scripts, and saw the following: -

^----^ SC2086 (info): Double quote to prevent globbing and word splitting.

for a piece of code that referenced a variable e.g. : -

echo $FILES

The shellcheck Wiki covers this: -

and suggests that $FILES be wrapped in double quotes e.g. : -

echo "$FILES"

So far, so good

However, the code in question was actually a variable containing more than one element e.g. : -

FILES="a.txt b.txt c.txt"

so the next line in the script which leveraged the values within the $FILES variable: -

ls "$FILES"

fails with: -

ls: a.txt b.txt c.txt: No such file or directory

Thinking more about this, it kinda made sense i.e. we're treating the values within the $FILES variable as elements within an array, but the variable is really just a single string, so quoting it hands all three names to ls as one argument.

The Wiki does reference this: -

Using that as inspiration, I updated the script: -

read -ra files <<<"${FILES}"

ls "${files[@]}"

In essence, read -ra splits the $FILES string into a "real" array, and "${files[@]}" then expands every element of that array as its own quoted argument

To be clear, I also took inspiration from: -

How to be explicit about intentional word splitting?

and this is my demo / test script: -

# Set variable

FILES="a.txt b.txt c.txt"

echo "Works, but breaks shellcheck"

ls $FILES

echo "Fails, but passes shellcheck"

ls "$FILES"

echo "Works, and passes shellcheck"

read -ra files <<<"${FILES}"

ls "${files[@]}"

which, when I run it, does this: -

./test-sc.sh

Works, but breaks shellcheck

a.txt b.txt c.txt

Fails, but passes shellcheck

ls: a.txt b.txt c.txt: No such file or directory

Works, and passes shellcheck

a.txt b.txt c.txt
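If the list of files is under my control from the start, a simpler route might be to declare the array directly rather than splitting a string. This is only a sketch, assuming Bash; the temporary directory and the file names ( including the one with a space ) are made up for illustration: -

```shell
#!/usr/bin/env bash
# Sketch only: the directory and file names below are hypothetical.
workdir=$(mktemp -d)
touch "$workdir/a.txt" "$workdir/b.txt" "$workdir/my notes.txt"

# Declare the array directly, so no string splitting is needed later.
files=("$workdir/a.txt" "$workdir/b.txt" "$workdir/my notes.txt")

# Each element reaches ls as its own argument, spaces and all.
ls "${files[@]}"
echo "${#files[@]} files listed"
```

The read -ra approach would split "my notes.txt" into two words, so the array form is probably the safer bet whenever file names might contain spaces.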

Finally, for now, there's a great shellcheck plugin for VS Code: -

ShellCheck for Visual Studio Code

and, for the record, the shellcheck project is available on GitHub

Thursday, 27 April 2023

Why oh why did I forget vimdiff ?

Whilst trying to compare two branches of a GitHub repo on my Mac, I was using diff to compare/contrast specific files, and trying to parse the differences.

And then I remembered vimdiff

Source: Linux `Vimdiff` Command – How to Compare Two Files in the Command Line

PS Using freeCodeCamp for the above image, as I don't want to reveal my sources ( i.e. source code )

Unix - redirecting output to /dev/null

In the past, I've used redirection to send output to /dev/null such as: -

foobar 2> /dev/null

where foobar is a non-existent command/binary, but I want the error output ( stderr ) such as: -

zsh: command not found: foobar

or: -

foobar: command not found

to be "hidden"

Similarly, I've used redirection to send "pukka" output ( stdout ) to also go to /dev/null e.g. : -

uptime 1> /dev/null

However, I'd not seen the simple way to do both in one fell swoop: -

foobar &> /dev/null

uptime &> /dev/null

where the ampersand ( & ) is used to send BOTH stdout and stderr to /dev/null

As ever, which is nice
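One caveat: &> is understood by Bash and Zsh, but is not part of POSIX sh. For scripts that might run under a plainer shell, the longer form should do the same job. A minimal sketch, where quiet is a hypothetical helper name of my own choosing: -

```shell
# Hypothetical helper: run any command, discarding both stdout and stderr.
# "2>&1" must come after ">/dev/null" so that stderr follows stdout.
quiet() {
  "$@" >/dev/null 2>&1
}

if quiet ls /; then echo "ls worked, silently"; fi
if ! quiet foobar; then echo "foobar failed, silently"; fi
```

The exit code of the wrapped command is preserved, so it can still be used in an if statement.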

IBM Container Registry - searching and formatting

So, when querying images that have been pushed to a namespace within IBM Container Registry, one can format the output to only return certain columns such as repository (image) name and tag.

Who knew ?

Well, the authors of the documentation did, apparently :-)

Formatting and filtering the CLI output

For example: -

ibmcloud cr images --format "{{ .Repository }}:{{ .Tag }}"

icr.io/hello/hello_world:1.1

icr.io/hello/hello_world:1.2

icr.io/hello/hello_world:latest

etc.

Other examples, from the doc, include: -

ibmcloud cr image-list --format "{{ if gt .Size 1000000 }}{{ .Repository }}:{{ .Tag }} {{ .SecurityStatus.Status }}{{end}}"

ibmcloud cr image-digests --format '{{if not .Tags}}{{.Repository}}@{{.Digest}}{{end}}'

ibmcloud cr image-inspect ibmliberty --format "{{ .ContainerConfig.Labels }}"

etc.

Which is nice!

*UPDATE*

And, bringing two posts together, I can report the created date AND format it from Epoch time: -

ic cr images --format "{{ .Repository }}:{{ .Tag }}:{{ .Created }}" | awk 'BEGIN { FS = ":"} ; {$3 = strftime("%c", $3)} 1'

Friday, 21 April 2023

Today I Learned - Munging Epochs using awk

So today I had a requirement to convert some Epoch-formatted dates, located in a CSV file, into human-readable dates...

So today I learned about awk vs. gawk, and the strftime() function ...

I also learned that awk on macOS isn't the same as "real" GNU awk ( aka gawk ), hence the need for gawk ...

I started by installing gawk: -

brew install gawk

and then updated my PATH to reflect it: -

PATH="/opt/homebrew/opt/gawk/libexec/gnubin:$PATH"

Using an example of my data: -

cat file.txt

1681990787

1681992853

1681712949

which is WAY simpler than my real data, I was then able to munge it using awk ( or, really, gawk ) : -

awk 'BEGIN { FS = ","} ; {$1 = strftime("%c", $1)} 1' file.txt

which returns: -

Thu 20 Apr 12:39:47 2023

Thu 20 Apr 13:14:13 2023

Mon 17 Apr 07:29:09 2023

I'd previously done much the same using Excel, via a formula: -

=F17/86400+DATE(1970,1,1)

where cell F17 contained the Epoch-formatted date

But scripts are so much more fun ...
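For the simple one-column case, the date command may be enough without installing gawk at all. The sketch below assumes that GNU date accepts -d @EPOCH and that BSD/macOS date accepts -r EPOCH; epoch_to_iso is a hypothetical function name, and I've used UTC so that the output doesn't depend upon the local timezone: -

```shell
# Hypothetical helper: convert an Epoch value to an ISO 8601 UTC timestamp.
# Tries the GNU date syntax first, then falls back to the BSD/macOS syntax.
epoch_to_iso() {
  date -u -d "@$1" '+%Y-%m-%dT%H:%M:%SZ' 2>/dev/null ||
    date -u -r "$1" '+%Y-%m-%dT%H:%M:%SZ'
}

# The same three values as in file.txt above.
printf '%s\n' 1681990787 1681992853 1681712949 |
  while read -r epoch; do epoch_to_iso "$epoch"; done
```

The times come out an hour earlier than the awk output above, as that was rendered in British Summer Time.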

Monday, 17 April 2023

IBM Cloud and JQ - more querying fun

A colleague laid down a challenge - well, he didn't actually lay it down, he merely posted a single-line script that used awk and sed and grep - so I decided to build a better mousetrap ...

The requirement ... to query one's IBM Cloud account for Kubernetes (K8s) clusters, in this case leveraging the IBM Kubernetes Service (IKS) offering, and report the cluster name and the flavour ( flavor to our US friends )

Here is up with what I ended: -

for i in $(ic cs cluster ls --provider vpc-gen2 --output JSON | jq -r '.[].name'); do echo "Cluster Name:" $i; echo -n "Flavor: "; ic cs workers --cluster $i --output JSON | jq -r '.[].flavor'; done

which resulted in: -

Cluster Name: davehay-14042023

Flavor: bx2.4x16

Cluster Name: davehay-15042023

Flavor: bx2.4x16

In essence, I list the clusters in the account ( across all regions ), specifically those using the Virtual Private Cloud Generation 2 ( vpc-gen2 ) provider, grab and output the name, and then use the name to inspect the cluster and report on its flavor ( sic ).

Rather than using awk and sed and grep etc. I chose to use jq, working on the assumption that all of our engineers will have that installed as it's a ubiquitous tool these days.

Tuesday, 4 April 2023

IBM Cloud CLI - Debugging

- IBMCLOUD_API_KEY

- IBMCLOUD_TRACE

- IBMCLOUD_COLOR

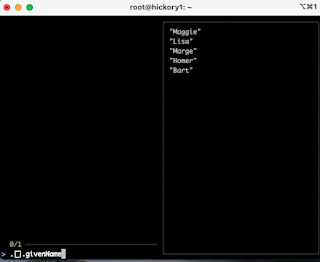

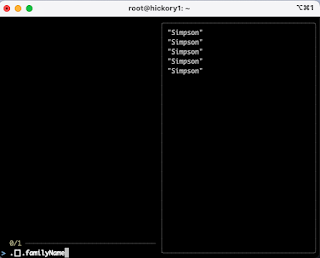

TIL: FuzzyFinder - fzf - and jq

Today I learned ( well, actually it was yesterday but who's counting days ? ) about fzf in the context of using it to test JQ expressions.

The revelation came from Julia Evans, author of WizardZines, aka b0rk, about whom I've written before.

She'd mentioned the use of fzf and jq in a post on Mastodon: -

so I had to try it out ...

Install fzf

brew install fzf

Create a JSON document

cat << EOF > the_simpsons.json

[

{

"givenName": "Maggie",

"familyName": "Simpson"

},

{

"givenName": "Lisa",

"familyName": "Simpson"

},

{

"givenName": "Marge",

"familyName": "Simpson"

},

{

"givenName": "Homer",

"familyName": "Simpson"

},

{

"givenName": "Bart",

"familyName": "Simpson"

}

]

EOF

Fire up fzf

echo '' | fzf --preview 'jq {q} < the_simpsons.json'

Tinker with various jq queries

Tuesday, 21 February 2023

Reading up on the differences between Zsh and Bash

Typically, when writing self-documenting scripts, especially those used during a demonstration, I'd add a command such as: -

read -p "Press [Enter] to continue"

However, using Zsh on macOS 13.2.1 Ventura, I saw: -

read: -p: no coprocess

If I skipped the -p I saw: -

zsh: not an identifier: Press [Enter] to continue

when I actually pressed the Enter key

As ever, the internet answered my cry for help

ZSH: Read command fails within bash function "read:1: -p: no coprocess"

So now I have this: -

ibmcloud cs cluster ls

read "?Press [Enter] to continue"
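For scripts that need to run under both Bash and Zsh, one option is to print the prompt separately and keep read itself as plain as possible. A minimal sketch; pause and pause_reply are hypothetical names of my own: -

```shell
# Hypothetical helper: prompt and wait for Enter, without relying on
# read -p (Bash) or read "?prompt" (Zsh).
pause() {
  printf '%s' "${1:-Press [Enter] to continue}"
  read -r pause_reply
}
```

It would then be called as pause, or as pause "Ready? " with a custom prompt, after a command such as ibmcloud cs cluster ls.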

Monday, 6 February 2023

Fun and games with Docker login on macOS

I've been around the houses with Docker Desktop, Podman and Docker via Homebrew on my Mac over the past few months

I saw something curious this morning, whilst trying to log into IBM Container Registry

ic cr login

Logging 'docker' in to 'uk.icr.io'...

FAILED

Failed to 'docker login' to 'uk.icr.io' with error: Error saving credentials: error storing credentials - err: docker-credential-desktop resolves to executable in current directory (./docker-credential-desktop), out: ``

I then tried the same auth process using docker login

docker login --username iamapikey uk.icr.io

but with the same effect: -

Password:

Error saving credentials: error storing credentials - err: docker-credential-desktop resolves to executable in current directory (./docker-credential-desktop), out: ``

In case I was missing something, I even tried the Docker Creds Helper: -

brew install docker-credential-helper

but to no avail.

Finally, in desperation, I nuked my Docker credentials file - which is a TERRIBLE thing imho

rm ~/.docker/config.json

and tried again: -

docker login --username iamapikey uk.icr.io

Password:

Login Succeeded

Even better, now that I'd installed the creds helper - which caches the creds in the macOS Keychain, the config.json is somewhat cleaner: -

cat ~/.docker/config.json

{

"auths": {

"uk.icr.io": {}

},

"credsStore": "osxkeychain"

}

Better still, the IBM Cloud CLI is also happy: -

ic cr login

Logging 'docker' in to 'uk.icr.io'...

Logged in to 'uk.icr.io'.

OK

Thursday, 2 February 2023

Removing filenames with special characters - a reminder

I've written this down somewhere before but ...

I have a file that I created, by mistake, by abusing the target during a scp command

ls -altrc

-rw-r--r-- 1 root root 2541 Feb 2 02:15 '~'

and I wanted to delete it - but it has weird characters in the file name

This to the rescue: -

rm -v -- '~'

removed '~'
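The quotes matter here, as an unquoted ~ would be expanded by the shell to my home directory. The -- marks the end of options, which also helps with file names that begin with a dash. A small sketch in a throwaway directory, with made-up file names: -

```shell
# Sketch only: recreate two awkwardly named files in a temporary
# directory, then remove them safely.
workdir=$(mktemp -d)
(
  cd "$workdir" || exit 1
  touch -- '~' '-rf'
  rm -v -- '~'   # quoted, so no tilde expansion; -- ends option parsing
  rm -v ./-rf    # a ./ prefix stops the name being read as an option
)
ls -A "$workdir"
```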

Wednesday, 1 February 2023

Reminder to self - check out Finch

From this: -

Today we are happy to announce a new open source project, Finch. Finch is a new command line client for building, running, and publishing Linux containers. It provides for simple installation of a native macOS client, along with a curated set of de facto standard open source components including Lima, nerdctl, containerd, and BuildKit. With Finch, you can create and run containers locally, and build and publish Open Container Initiative (OCI) container images.

Introducing Finch: An Open Source Client for Container Development

a friend had recommended that I check out Finch, so it's definitely on my personal to-do list.

Monday, 16 January 2023

Go modules and forks

I'm tinkering with a Go project ( let's call it foobar ), which has a dependency upon yet another Go project ( let's call it snafu ) in the same organisation.

For the moment, I've got a fork of snafu in my own org - david-hay - and wanted to update go.mod in the foobar project to leverage it.

I knew I had to update go.mod either manually or use go mod edit --replace source=target but couldn't get past the exception: -

go: -replace=github.com/org/snafu=github.com/david-hay/snafu: unversioned new path must be local directory

It turned out, as ever, that I was holding it wrong ...

I needed to explicitly provide a "version" - in this context, that was a branch e.g. main at which to point the target during go mod edit --replace like this: -

go mod edit --replace github.com/org/snafu=github.com/david-hay/snafu@main

In other words, the right-hand side of the --replace directive needed to include four things: -

- domain e.g. github.com

- organization e.g. david-hay

- project/repo e.g. snafu

- branch e.g. main

like this: -

...

replace github.com/org/snafu => github.com/david-hay/snafu main

When I then ran go mod tidy, this went off to GitHub and pulled down the latest commit from that branch in my fork: -

go: downloading github.com/david-hay/snafu v1.11.39-0.20230116121922-46267d910e38

and updated go.mod with: -

replace github.com/org/snafu => github.com/david-hay/snafu v1.11.39-0.20230116121922-46267d910e38

In other words, it replaced the branch name with a pseudo-version pinned to that commit ... which is nice

With thanks to this: -

Pointing to a fork of a Go module

Friday, 13 January 2023

Fun with Git and branching

In very brief terms, I hit an issue last week where I'd created four branches in a repo, having cloned the main branch of the upstream repo.

Therefore, I'd done something like this: -

git clone -b main git@github.com:snafu/foobar.git

cd ~/foobar

git fetch origin && git rebase origin/main

to bring the main branch down to my Mac.

I'd then created my first branch: -

git branch dave1

git switch dave1

and added/changed some code, committed it, and pushed my new branch upstream.

I then went ahead and created a second branch: -

git branch dave2

git switch dave2

git fetch origin && git rebase origin/main

and again added/changed some code, committed it, and pushed the new branch upstream

All seemed fine ...

And I did the same for two more branches - dave3 and dave4 - with a PR for each branch being reviewed/approved and merged into main.

And then I found, when merging the separate Pull Requests, that my changes from dave2 overwrote the changes made in dave1.

Which was weird....

A colleague helped explain ...

I see the 4 PRs were created from a shared branch instead of independently being created from main. That could explain the unexpected behavior where they kept rewriting each other.

He went on to explain how to avoid the issue ...

When you run git checkout -b branchname it creates a branch branching off of your current branch.

I am used to running git checkout main; git checkout -b branchname to ensure my branches are direct branches off of main. That helps rebase them based on other PRs merging to main.

which worked a treat

So, now I've learned this, and am trying hard to add this to my "muscle memory" ...

git clone -b main git@github.com:snafu/foobar.git

cd foobar

git fetch origin && git rebase origin/main

git checkout main; git checkout -b dave1

etc.

We shall see if that sticks ....
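An alternative that might save a step is to name the starting point explicitly, as git checkout -b accepts one after the branch name. The sketch below plays this out in a throwaway repository; branch_from_main is a hypothetical helper, and the demo identity and commit messages are made up: -

```shell
# Hypothetical helper: create a branch from main, whatever is checked out now.
branch_from_main() {
  git checkout -q -b "$1" main
}

# Demo in a throwaway repository.
demo_repo=$(mktemp -d)
cd "$demo_repo" || exit 1
git init -q
git symbolic-ref HEAD refs/heads/main   # name the initial branch main
git -c user.name=demo -c user.email=demo@example.com \
  commit -q --allow-empty -m "initial"

branch_from_main dave1
git -c user.name=demo -c user.email=demo@example.com \
  commit -q --allow-empty -m "dave1 work"

branch_from_main dave2   # starts at main, not at dave1
git rev-parse dave2 main # both lines show the same commit
```

Against a real remote, the starting point would more likely be origin/main after a git fetch origin, so that the new branch begins at the latest upstream commit.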

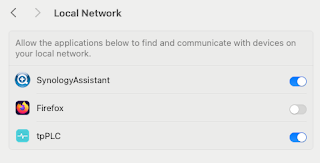
